Gestures

From Openmoko

MinhHaDuong (Talk | contribs) (→Sources: Code moved to own domain) |

(+cat) |

||

| Line 235: | Line 235: | ||

[[Category:System Applications]] | [[Category:System Applications]] | ||

| + | [[Category:Accelerometer applications]] | ||

Revision as of 17:23, 19 July 2009

Gestures is one of the applications that runs on the Openmoko Phones. For a list of all applications, visit Applications

Contents |

Alpha 2 Release (14 Aug 2008) for Neo FreeRunner

Video Demo

Openmoko Neo FreeRunner does Accelerometer-based Gestures, and Screen Orientation

Remark: Please watch the video, so that you'd know what to expect out of the Alpha 2 Release.

Sources

In October 2008, the code moved from the Accelges Google Summer of Code repository to a GIT server located at http://repo.accelsense.org.

Alpha 2 Release: The Quick Way

SSH Neo FreeRunner:

wget http://accelges.googlecode.com/files/accelges_0.1.0-svnr204-r2_armv4t.ipk opkg install accelges_0.1.0-svnr204-r2_armv4t.ipk

On The Neo FreeRunner

/etc/init.d/gesl start

Over SSH you must use

DISPLAY=:0 /etc/init.d/gesl start

(otherwise screen orientation won't work):

You're ready: make a gesture, make Neo change its screen orientation (if you did watch the video, you'll know what to do).

Training: The Quick Way

OPTIONAL: Stop the gesture recognizer, and listener:

/etc/init.d/gesd-neo2 stop /etc/init.d/gesl stop

Run

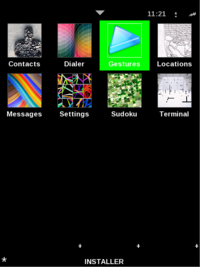

Home > Gestures

Select a gesture, press Train, and follow instructions.

REQUIRED: Start/restart the gesture recognizer, and listener:

/etc/init.d/gesd-neo2 start (from SSH, or from Neo) /etc/init.d/gesl start (only from Neo)

Alpha 2 Release Details

Prerequisites

- You can use any distribution you like; I would suggest using the Om 2008.8 for now. You'll need these dependencies:

dbus, dbus-glib, libxrandr, libnotify, notification-daemon, libcurl, and gtk+

- On the Om 2008.8, you'll be missing:

libnotify, notification-daemon, libsexy2, libwnck-1-18, libstartup-notification-1-0, and libglade-2

opkg install libnotify notification-daemon libsexy2 libwnck-1-18 libstartup-notification-1-0 libglade-2.0-0

Remark: On the 2008.8, your missing dependencies will be downloaded automatically by the installer.

Preparing Om 2008.8 for Gestures

- Turn off Suspend:

Home > Settings > Suspend > off

- Make sure Neo has Web Access (use SSH):

echo "nameserver xxx.xxx.xxx.xxx" > /etc/resolv.conf

- Install Terminal:

Home > Installer > Development > openmoko-terminal2

- Create a shell script that will start the gesture listener daemon (use SSH):

echo "exec /etc/init.d/gesl start" > /usr/bin/gesl-begin chmod +x /usr/bin/gesl-begin

- Restart Neo!

Installing Gestures

- Download the Alpha 2 Release Package, or (use SSH):

echo "nameserver xxx.xxx.xxx.xxx" > /etc/resolv.conf wget http://accelges.googlecode.com/files/accelges_0.1.0-svnr204-r2_armv4t.ipk

- Install:

opkg install accelges_0.1.0-svnr204-r2_armv4t.ipk

Training Gestures

- Launch the Gesture Manager:

Home > Gestures

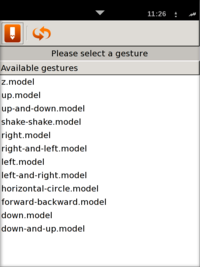

- Select a gesture and click the first icon on the toolbar; Follow the instructions. Do the same for all the gestures!

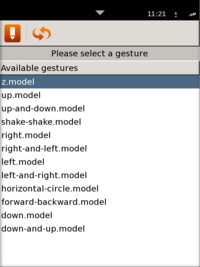

- Step 1: Launch Gesture Manager

- Step 2: This is the Gesture Manager

- Step 3: Select a gesture, and click Train (first icon from the toolbar)

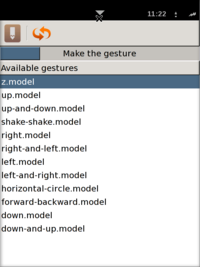

- Step 4: Make the gesture (this step creates the gesture)

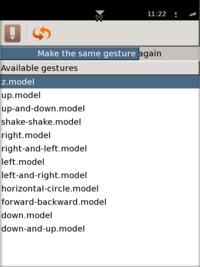

- Step 5: Make the gesture again (this step trains the gesture)

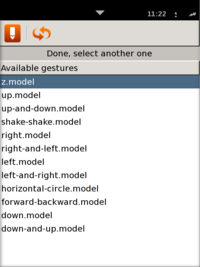

- Step 6: Select another gesture, and do the same

- Step 1: Launch Gesture Manager

- Restart the Gesture Recognizer (use SSH):

/etc/init.d/gesd-neo2 restart

Remark: You have to restart the Gesture Recognizer, so that it will re-read the newly trained gestures. Every time you train one or more gestures, restart the recognizer!

Running Gestures

- Use the script you have created before; be sure to run the script from the Neo, no SSH or VNC:

gesl-begin

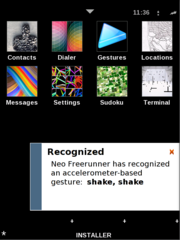

- There are 12 gestures available (once, you make one, a notification will pop up):

Remark: If you don't start the Gesture Listener from the Neo, screen orientation won't work

How To Improve Recognition Accuracy

- Check to see which gesture gets recognized most often. Try training those gestures again.

- Determine which gestures don't get recognized at all. Try training those gestures again.

Alpha 2 Development Details

DBUS

Gesture Recognizer (gesd) sends signals on:

org.openmoko.accelges /org/openmoko/accelges/Recognizer org.openmoko.accelges.Recognizer.Recognized

Try (on FSO distribution)

mdbus -s -l

and make a gesture for more information on DBUS.

Configuration Files

The configuration file for Neo is:

/etc/accelges/neo2/neo2.cfg

# Paul-Valentin Borza <paul@borza.ro> # gestures configuration file for Neo/Wii # classes and probabilities for classifier # sclass\tFILE static acceleration class # dclass\tFILE dynamic acceleration class # sclassp\tVALUE probability for static acceleration class # dclassp\tVALUE probability for dynamic acceleration class sclass s.class dclass d.class sclassp 0.4 dclassp 0.6 # classes for recognizer (add more than one) # class\tid\tFILE static acceleration class class screen_zzp screen_zzp.class class screen_zzn screen_zzn.class class screen_zpz screen_zpz.class class screen_znz screen_znz.class class screen_pzz screen_pzz.class class screen_nzz screen_nzz.class class screen_npp screen_npp.class class screen_nnp screen_nnp.class class screen_pnp screen_pnp.class class screen_ppp screen_ppp.class # models for recognizer (add more than one) # model\tid\tFILE dynamic acceleration model model left left.model model left, and return left-and-right.model model right right.model model right, and return right-and-left.model model up up.model model up, and return up-and-down.model model down down.model model down, and return down-and-up.model model shake, shake shake-shake.model model horizontal circle horizontal-circle.model model forward, backward forward-backward.model model z z.model

Again, if you modify the configuration file, you'll have to restart the gesture recognizer (gesd).

Daemons

Gesture Recognizer that uses the top accelerometer (/dev/input/event2):

/etc/init.d/gesd-neo2 start|stop|restart

Gesture Listener that switches screen orientation, and notifies the user of recognized gestures on the screen:

/etc/init.d/gesl start|stop|restart

Remark: Start the recognizer first, and after that the listener (obvious, but important)

Components/Applications

Try playing with:

gesd (gesture recognizer) gesl (gesture listener) gesm (gesture manager)

Known Issues

- One, or both accelerometers might not work. Try:

hexdump /dev/input/event2 (for top accelerometer) hexdump /dev/input/event3 (for bottom accelerometer)

Remark: The Alpha 2 Release uses the TOP accelerometer

- After a while, the accelerometers might stop working.

Restart your Neo!

- After a while, the accelerometer send bogus values (values that are lower than what they should be). If this happens, the classifier won't be able to classify dynamic/static acceleration, and nothing will work - nor the gesture training, nor the gesture recognition.

Restart your Neo!

Use Cases

- Mute audio or suspend when screen is facing down; - NO SUPPORT FOR MUTE/UNMUTE AUDIO IN NEO FOR NOW

- Go to main menu when shaken; - NO SUPPORT YET

- Volume up/down during call when tilting left/right (still unclear); - NO SUPPORT YET

- Turning the phone face to the user (not the same as taking it to the ear) to turn on the backlight - BACKLIGHT HAS A BUG, CAN'T BE USED YET

- Automatic portrait/landscape switching for the UI - Done

- Turning the phone screen down to mute sound (and probably turn off the backlight) or hold call

- Swinging in an O-shape in the air to redial

- Moving the phone in a firm gesture from one ear to the other to switch between active and held calls

- Scrolling with firm tilts (suggested several times, should see if it's usable)

- Dropping (suggested several times, though it's unclear how to react to it)

- Shaking to get audio feedback (could e.g. imitate balls rolling inside to the number of unread messages, or liquid splashing to incdicate the battery level)

- Starting driving in a car (if that's detectable -- probably has other patterns than walking etc) to switch to some “car mode”

- Stopping e.g. at a traffic light to choose a better time to notify about new messages than while driving

- Taking off in a plane (should be detectable, but hard to train) to shut down all RF systems

- This is a bad idea - you need to do that way before it lifts off the ground

- Similarly, landing to re-enable RF systems

- Similarly, you shouldn't turn them back on until way after the wheels touch the tarmac

- Vigorous shaking (side to side) while receiving a call could reject it.

- A sideways swing (90degres) out of the wrist could mean general Cancel/Esc/Back

- A long swing could close a app (more a arm swing than wrist. Same G-forces but longer time).

- These swing moves could be used on two axis and each in two axis for different usage.

- A firm wrist tilt backside down could mean global OK.

- Maybe some basic moves like these should have absolute global meaning. (like left, right, enter, esc)

- Mute phone my hitting it on something hard three times with one side.

- Face down lying still - lock screen

- Face up lying still - never lock screen

- holding the moko out & angling the front of it up repeatedly turns up volume

- angling front down repeatedly turns down volume

- a set of 5 or 10 standard, easily distinguishable gestures that the user can map to favorite programs

|

Gestures

Gestures is a daemon that interpret measurements from the accelerometers and the movements you make with your FreeRunner. It also switches between portrait and landscape if it notices that your FreeRunner has been rotated.

|